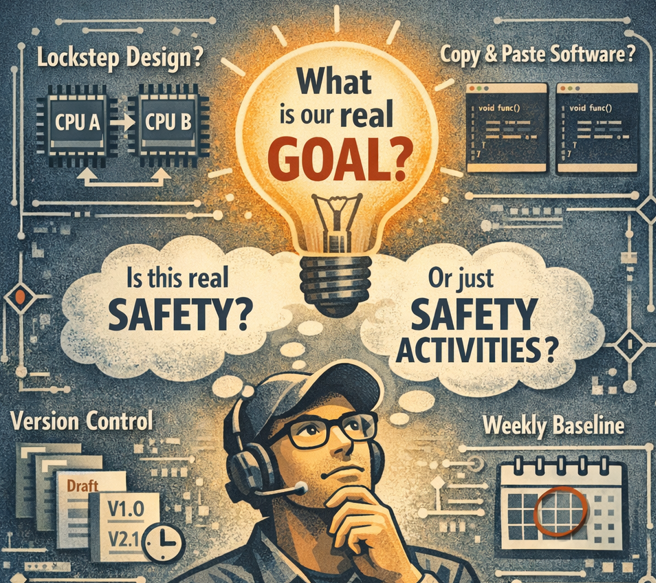

Designing Safety, or Designing Safety Activities?

Feb 22, 2025

Jeonghyun Kim

Working on Automotive Functional Safety (ISO 26262), I often find myself asking a simple question: Are we really designing for safety? Or are we designing safety activities? Let me share a few real-world examples.

(Question 1) Can simulation really solve random hardware faults?

Suppose a stuck-at fault in an SRAM controller flip-flop can lead to a safety issue. What should we do? Lockstep costs too much silicon area. Maybe extensive simulation and testing will be enough? Here is the uncomfortable truth:

ISO 26262 primarily treats random hardware faults as statistical phenomena.

“Random” means:

• The root cause cannot be eliminated.

• The occurrence must be treated probabilistically.

• Engineering effort alone does not make it disappear.

A transistor can become stuck at a fixed value for many reasons. Soft errors caused by high-energy neutrons are another example. We may understand the physics, but we cannot prevent them.

From a functional safety perspective, the fundamental countermeasure to random hardware faults is redundancy.

Simulation and testing are essential, but they are tools for addressing systematic faults, not for reducing the intrinsic rate of random faults. This is also why ISO 26262 relies on standardized failure rate sources and calculation methods (e.g., SN 29500) rather than project-specific estimates. The goal is not to compute a scientifically perfect failure probability.

The goal is to compare a new design against decades of proven field experience and determine whether it is sufficiently safe.
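To make the point concrete, here is a minimal sketch of the usual simplified relation between a raw failure rate and what remains after a safety mechanism: residual rate = raw rate × (1 − diagnostic coverage). The FIT value and the coverage figure are assumptions chosen for illustration; they are not taken from SN 29500 or from any real device.

```python
# Hypothetical numbers for illustration only; not from SN 29500 or any datasheet.
# 1 FIT = one failure per 10^9 device-hours.

def residual_fit(raw_fit: float, diagnostic_coverage: float) -> float:
    """Failure rate that escapes detection: raw rate times (1 - DC)."""
    if not 0.0 <= diagnostic_coverage <= 1.0:
        raise ValueError("diagnostic_coverage must be within [0, 1]")
    return raw_fit * (1.0 - diagnostic_coverage)

# Assumed raw failure rate of the flip-flops in question, in FIT.
raw = 10.0

# More simulation or testing does not change `raw`; only a mechanism
# that detects the fault at runtime changes what remains undetected.
no_mechanism = residual_fit(raw, 0.0)    # nothing detects the fault
with_lockstep = residual_fit(raw, 0.99)  # assumed 99 % coverage from redundancy

print(f"without mechanism: {no_mechanism:.2f} FIT")
print(f"with redundancy:   {with_lockstep:.2f} FIT")
```

The raw rate is an input in this calculation, not something verification effort can move. The only lever is the coverage term, and that comes from a runtime mechanism such as redundancy.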

A few years ago, saying “This IP should be implemented in lockstep” often sounded excessive.

Today, if you look inside ARM Automotive Edition IPs, lockstep-based architectures are no longer optional; they are close to a baseline expectation.

The technology did not suddenly change. Our collective understanding of fault models matured.

(Question 2) If software is duplicated, is it safer?

In software architecture discussions, this often comes up: “This component has no safety mechanism. Should we just duplicate it and run it twice?”

Most software failures stem from systematic faults. They have a root cause. If the cause is removed, the failure does not recur.

If a small software component has been sufficiently verified and deemed free of systematic faults, what does identical redundancy actually provide?

Identical redundancy is effective against random hardware faults. Against systematic software faults, its effectiveness is essentially zero, because both copies contain the same defect and produce the same wrong result.
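A small sketch can illustrate the difference. The component `scale_torque`, its deliberate bug, and the bit-flip injection are all hypothetical and exist only to show how a run-twice-and-compare scheme behaves under each fault model.

```python
def scale_torque(pedal_percent: int) -> int:
    """Hypothetical component with a systematic fault:
    it clamps at 110 although the intended limit is 100."""
    return min(pedal_percent, 110)

def run_twice_and_compare(func, value, flip_bit_in_second_run=None):
    """Execute func twice and compare the results.

    flip_bit_in_second_run optionally emulates a random hardware
    bit flip that corrupts only the second result.
    """
    first = func(value)
    second = func(value)
    if flip_bit_in_second_run is not None:
        second ^= 1 << flip_bit_in_second_run
    return first, second, first == second

# Systematic fault: both runs return the same wrong value, so the
# comparison passes and the fault goes unnoticed.
systematic = run_twice_and_compare(scale_torque, 105)

# Random fault: one run is corrupted, so the comparison fails and
# the fault is detected.
random_fault = run_twice_and_compare(scale_torque, 50, flip_bit_in_second_run=3)

print(systematic)
print(random_fault)
```

In the first case the comparator reports agreement on an out-of-range value. The duplication only pays off in the second case, where the two runs actually diverge.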

Historically, when MCUs and SoCs lacked strong hardware safety mechanisms, software had to compensate. Early E-GAS architectures are good examples. But as dual-core lockstep and hardware redundancy became standard, software-level redundancy has become far more selective.

The key question is not:

“Can we duplicate it?”

The real question is:

Which fault model are we addressing?

Without that clarity, redundancy may increase complexity more than safety.

(Question 3) Version control from day one — does that mean we are mature?

“We require version control from the day a document is created.” Many organizations do this. But why do we version-control in the first place? At its core, version control enables collaboration.

• What I understand

• What you understand

must be consistent.

(Whenever I think about this, cache coherence comes to mind. Perhaps that confirms I am still a semiconductor engineer.)

So when does version control truly become critical? When I create a private draft? Or when the document becomes shared and collaboration begins?

Supporting processes are important. But applying standard clauses literally is different from understanding their objective. Over-engineered processes may not reduce systematic fault risk; they may simply consume engineering energy.

(Question 4) Weekly baselines — strong management or hidden cost?

“We create a project baseline every week.”

The primary purpose of a baseline is simple:

To create a reliable rollback point.

If configuration management is already solid, you can theoretically revert to any past state. A baseline simply makes it practical.
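As a rough sketch of that idea, a baseline can be modeled as nothing more than a named, frozen record of which revision of each item belongs together. The item names, revision labels, and the baseline name "ES1" below are hypothetical.

```python
from types import MappingProxyType

# Hypothetical revision history per configuration item (oldest first).
history = {
    "safety_plan": ["r1", "r2", "r3"],
    "hw_design_spec": ["r1", "r2"],
    "fmeda": ["r1", "r2", "r3", "r4"],
}

baselines = {}

def create_baseline(name: str) -> None:
    """Freeze the latest revision of every item under one name."""
    snapshot = {item: revs[-1] for item, revs in history.items()}
    baselines[name] = MappingProxyType(snapshot)  # read-only view

def rollback_target(name: str) -> dict:
    """Return the exact revision set to restore for a given baseline."""
    return dict(baselines[name])

create_baseline("ES1")
history["fmeda"].append("r5")  # work continues after the baseline

print(rollback_target("ES1"))
```

Every revision was already recoverable from `history`. The baseline adds only the agreed answer to "which revisions belong together", which is what makes a rollback practical.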

But baselining is not free. It often requires a temporary pause across the project.

I once experienced this in a discussion with an overseas customer.

Customer: “How often do you create baselines?”

Supplier: “Every two weeks.”

The response was unexpected:

Customer: “Then when do you actually develop what we ordered?”

In that project, the first engineering baseline was created ten months later — at the first engineering sample release. Baselines are powerful — but only when used strategically.

What we should not forget

We must always strive for safety.

But striving for safety does not mean maximizing visible safety activities.

Functional safety is not a checklist. It is an engineering discipline.

Before applying measures and methods, I believe we should always ask:

What is the goal of this activity? And which fault model is it addressing?

If we ask that first, we may achieve stronger safety with less unnecessary complexity.

And perhaps we can prevent talented functional safety engineers from burning out under process overload.